Classifier architecture and data preprocessing jointly shape accelerometer-based behavioural inference

Brun, L.; Rothrock, J. M. B.; van de Waal, E.; George, E. A.

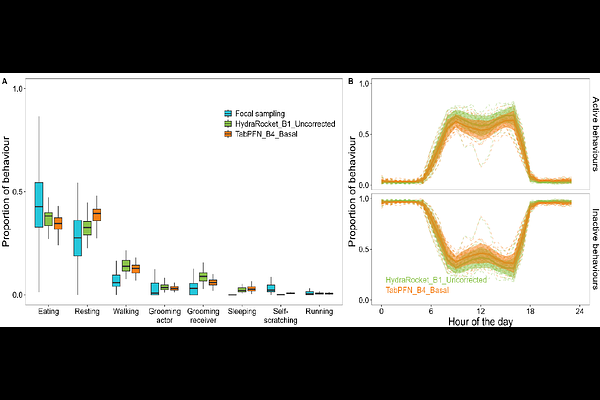

Abstract

1. Although the use of accelerometer-based behavioural classification to quantify animal activity budgets is gaining widespread traction, the interactions between key preprocessing decisions and modern classification algorithms remain poorly understood. Moreover, classification pipelines are commonly assessed using global performance metrics, despite increasing evidence that such metrics poorly reflect behaviour-specific patterns and ecological reliability.

2. Using a free-ranging primate (Chlorocebus pygerythrus) as a case study, we benchmarked how temporal segmentation (burst length), collar orientation correction, and model architecture jointly shape behavioural inference. We compared nine supervised algorithms spanning classical machine learning, feature-based deep learning including a tabular foundation model (TabPFN), and state-of-the-art time-series architectures (HydraMultiROCKET). Beyond conventional metrics, performance was further evaluated using ecological validation against independent focal observations to assess model stability and biological plausibility.

3. Model architecture exerted the strongest influence on classification outcomes. Modern deep-learning approaches substantially outperformed classical models, doubling recall for rare behaviours (e.g., grooming, self-scratching) without compromising precision. In contrast, burst length and collar orientation correction had little effect on global metrics but produced substantial, behaviour-specific trade-offs. Shorter bursts improved the detection of rare events by increasing training instances, while orientation correction suppressed dataset-specific artifacts at the cost of degrading common behaviours. Crucially, models with similar global and behaviour-level validation metrics produced divergent predictions when applied outside the annotated context.

4. Our findings reveal that global metrics are insufficient for optimizing behavioural inference in complex wild systems. We demonstrate that modern deep-learning architectures, such as the ROCKET family, provide a robust, accessible baseline that handles class imbalance more effectively than traditional methods. We propose that reliable inference requires behaviour-aware evaluation frameworks that integrate ecological validation, and advocate for ensemble or hierarchical strategies to leverage the complementary strengths of different preprocessing and modelling configurations.
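The point that global metrics can hide behaviour-specific differences can be illustrated with a minimal sketch. The labels and predictions below are hypothetical toy values, not data or results from the study, and the helper names `accuracy` and `per_class_recall` are chosen here for illustration only. The two made-up classifiers reach the same overall accuracy while differing sharply in recall for the rare class.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of bursts whose predicted label matches the annotation."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_recall(y_true, y_pred):
    """Recall per behaviour: correctly predicted bursts / annotated bursts."""
    support = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: hits[label] / n for label, n in support.items()}


# Hypothetical, imbalanced toy annotations: 90 common bursts, 10 rare bursts.
y_true = ["resting"] * 90 + ["grooming"] * 10

# Toy model A: every resting burst correct, only 2 of 10 grooming bursts found.
pred_a = ["resting"] * 90 + ["grooming"] * 2 + ["resting"] * 8

# Toy model B: 8 of 10 grooming bursts found, but 6 resting bursts mislabelled.
pred_b = ["resting"] * 84 + ["grooming"] * 6 + ["grooming"] * 8 + ["resting"] * 2

acc_a, acc_b = accuracy(y_true, pred_a), accuracy(y_true, pred_b)
recall_a = per_class_recall(y_true, pred_a)
recall_b = per_class_recall(y_true, pred_b)

print(f"accuracy A={acc_a:.2f}, B={acc_b:.2f}")
print(f"grooming recall A={recall_a['grooming']:.2f}, B={recall_b['grooming']:.2f}")
```

In this toy setting a selection based on accuracy alone could not separate the two models, which is why a per-behaviour breakdown is a useful complement to a single global score.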