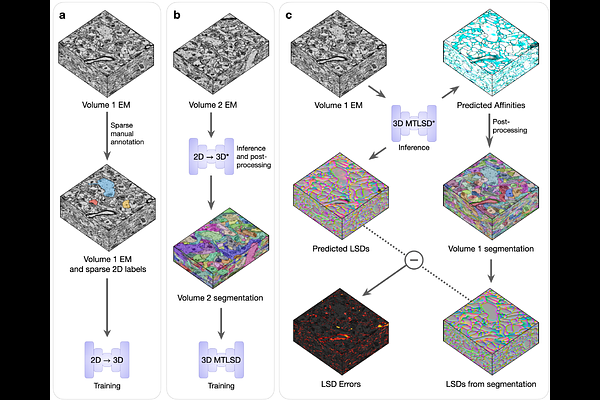

A deep learning-based strategy for producing dense 3D segmentations from sparsely annotated 2D images

Thiyagarajan, V. V.; Sheridan, A.; Harris, K. M.; Manor, U.

Abstract

Producing dense 3D reconstructions from biological imaging data is a challenging instance segmentation task. Effective and accurate deep learning-based models for it require substantial ground-truth training data. Generating that training data demands intense human effort to annotate each instance of an object across serial section images. We focus on the especially complicated brain neuropil, which comprises an extensive interdigitation of dendritic, axonal, and glial processes visualized through serial section electron microscopy. We developed a novel deep learning-based method that rapidly generates dense 3D segmentations from sparse 2D annotations of a few objects on single sections. Models trained on these rapidly generated segmentations achieved accuracy similar to that of models trained on expert dense ground-truth annotations. The human time required to generate annotations was reduced by three orders of magnitude, and non-expert annotators could produce the annotations. This capability will democratize the generation of training data for the large image volumes needed to reconstruct brain circuits and measure circuit strengths.