BOTANIC-0: a series of foundation models for plant genomic data

Ogier du Terrail, J.; Marchand, T.; Cabeli, V.; Khadir, Z.; Veran, C.; Strouk, L.

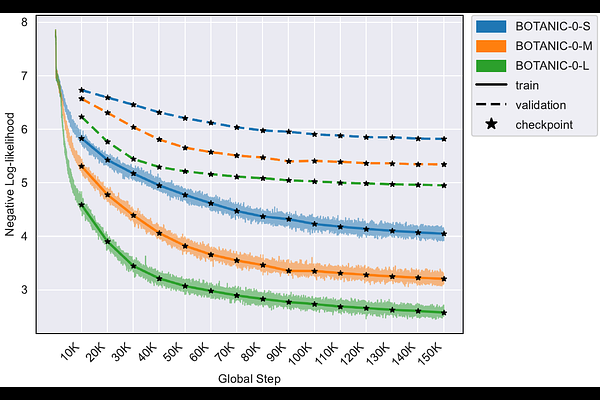

Abstract

Genomic language models (gLMs) have emerged as a powerful paradigm for learning regulatory biology directly from DNA sequence. Here, we introduce Botanic0, a family of plant genomic foundation models spanning 100M to 1B parameters and pretrained on 43 phylogenetically diverse plant genomes. The Botanic0-S, Botanic0-M, and Botanic0-L models form the first generation of a long-term research initiative dedicated to advancing crop improvement research, genotype-to-phenotype modeling, and sequence-based genome editing. The architecture, pretraining pipeline, and pretraining dataset of Botanic0 follow the seminal work of [1]. Across a broad suite of genomic and genetic prediction tasks, including regulatory element annotation, gene expression inference, and variant effect prediction, Botanic0 models achieve performance competitive with state-of-the-art foundation models, both in zero-shot settings and after fine-tuning. Scaling analyses reveal consistent improvements in predictive power with increased model capacity, highlighting the benefits of large-model pretraining for plant genomics. This work establishes our ability to train foundation models at scale and lays the groundwork for subsequent generations of models. To support reproducible research and community benchmarking, we release all Botanic0 models at https://huggingface.co/living-models/models.