The Combinatorial Capacity and Robustness of Hierarchical Concept Coding in the Human Medial Temporal Lobe

Cao, L.

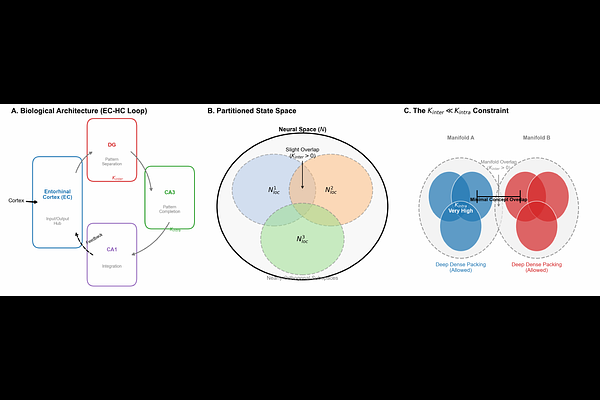

Abstract

The human brain encodes a virtually unlimited repertoire of semantic concepts with a finite number of neurons, a feat that exceeds the capacity limits of classical attractor networks. Although concept cells in the medial temporal lobe (MTL) exhibit extreme sparsity, the information-theoretic principles that govern their collective robustness remain elusive. Here, we present a hierarchical coding framework that resolves this paradox. We rigorously prove that uniform sparse coding is bounded by a polynomial exclusion-volume limit, which leads to catastrophic interference, whereas a hierarchically partitioned architecture unlocks exponential combinatorial capacity. We show that this locally dense, globally sparse topology mirrors the distance-dependent connectivity of the hippocampal CA3 region. Crucially, we derive a Supply-Demand theoretical model of Cognitive Reserve that quantitatively predicts the Silent Phase of neurodegeneration and the mathematical inevitability of the clinical "Cliff Edge" collapse in Alzheimer disease. Furthermore, our findings identify the lack of topological sparsity as the root cause of catastrophic forgetting in artificial neural networks, offering a blueprint for next-generation, biologically plausible AI architectures.
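The contrast between polynomial and exponential capacity can be illustrated with a toy count. The sketch below is not the paper's construction. It rests on two assumptions of our own. First, for uniform sparse coding, every concept activates exactly `k` of `N` neurons and any two concepts may share at most `t` neurons. A standard packing argument (each `(t+1)`-subset of neurons fits inside at most one codeword) then caps the number of concepts at `C(N, t+1) / C(k, t+1)`, which is polynomial in `N`. Second, for the hierarchical case, neurons are split into independent modules, and each module takes one of a fixed number of local patterns, which gives a product code whose size grows exponentially in the number of modules. The function names and parameter values are hypothetical.

```python
from math import comb, log2


def uniform_packing_bound(N: int, k: int, t: int) -> int:
    """Upper bound on the number of k-sparse codewords over N neurons whose
    pairwise overlap is at most t. Two such codewords cannot share any
    (t+1)-subset, so M * C(k, t+1) <= C(N, t+1); M is an integer, so the
    floor is still a valid bound. Polynomial in N (degree t+1) for fixed k, t.
    Hypothetical illustration, not the paper's exclusion-volume derivation."""
    return comb(N, t + 1) // comb(k, t + 1)


def hierarchical_count(N: int, module_size: int, states_per_module: int) -> int:
    """Number of distinct codes when N neurons are partitioned into
    N // module_size independent modules, each in one of states_per_module
    local patterns (a toy product code). Exponential in the number of modules."""
    n_modules = N // module_size
    return states_per_module ** n_modules


if __name__ == "__main__":
    # Assumed toy parameters: 10 active neurons per concept with at most
    # 2 shared between concepts; modules of 10 neurons with 10 local states each.
    print(f"{'N':>5} {'log2 uniform':>13} {'log2 hierarchical':>18}")
    for N in (100, 200, 400, 800):
        u = uniform_packing_bound(N, k=10, t=2)
        h = hierarchical_count(N, module_size=10, states_per_module=10)
        print(f"{N:5d} {log2(u):13.1f} {log2(h):18.1f}")
```

Doubling `N` multiplies the uniform bound by roughly 2^(t+1) = 8, which adds only about 3 bits. The same doubling squares the hierarchical count, so its bit count doubles. The toy model deliberately ignores noise tolerance and overlap between modules, so it shows only the scaling regimes and makes no claim about the capacity of real MTL circuits.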