ALABI: Active Learning for Accelerated Bayesian Inference

Jessica Birky, Rory K. Barnes

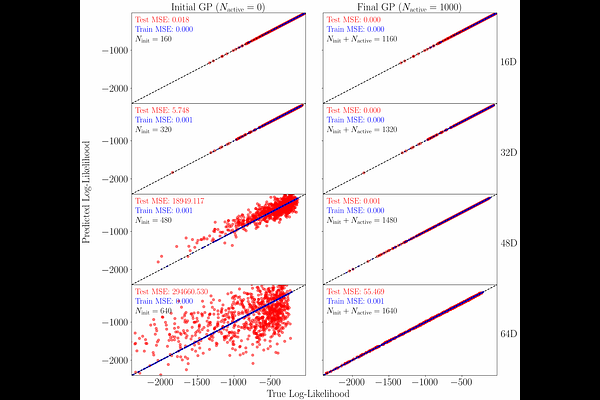

Abstract

We present Active Learning for Accelerated Bayesian Inference (\texttt{alabi}), an open-source Python package for performing Bayesian inference with computationally expensive models. Given a forward model and observational data from which to construct a likelihood and priors, \texttt{alabi} trains a Gaussian process (GP) surrogate model to predict the posterior probability as a function of the input parameters, and employs active learning to iteratively improve the GP's predictive performance in high-likelihood regions where the GP is most uncertain. \texttt{alabi} provides a uniform interface for running Markov chain Monte Carlo (MCMC) and nested sampling with different packages, including the affine-invariant sampler \texttt{emcee} and the nested samplers \texttt{dynesty}, \texttt{multinest}, and \texttt{ultranest}. This approach facilitates accurate estimation of the desired posterior distribution while reducing the number of computationally expensive model evaluations required by factors of thousands. We demonstrate the performance of \texttt{alabi} on a variety of test cases, including cases where inference is challenging due to complex posterior structure or high dimensionality. We show that \texttt{alabi} offers a substantial improvement for likelihood functions with evaluation times $\gtrsim 1\,\mathrm{s}$, speeding up MCMC computations by factors of $10$ to $1000$ when tested on problems with up to 64 dimensions.
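
To make the surrogate-plus-active-learning idea concrete, the following is a minimal one-dimensional sketch written with the Python standard library only. It does not use the \texttt{alabi} API and is not the package's implementation. Everything in it is an assumption made for illustration: the toy `log_posterior`, the fixed squared-exponential kernel hyperparameters, the grid of candidate points, and the acquisition rule (GP mean plus `kappa` times GP standard deviation), which simply stands in for "high posterior probability and high GP uncertainty".

```python
import math

def log_posterior(x):
    # Hypothetical stand-in for an expensive model: an unnormalised
    # Gaussian log-posterior centred on x = 1.
    return -0.5 * (x - 1.0) ** 2

def kernel(a, b, amp=10.0, length=1.0):
    # Squared-exponential covariance; hyperparameters are fixed by assumption
    # here rather than optimised.
    return amp * math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(A, b):
    # Solve A x = b by Gaussian elimination with partial pivoting.
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for i in range(n):
        pivot = max(range(i, n), key=lambda r: abs(M[r][i]))
        M[i], M[pivot] = M[pivot], M[i]
        for r in range(i + 1, n):
            f = M[r][i] / M[i][i]
            for c in range(i, n + 1):
                M[r][c] -= f * M[i][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][c] * x[c] for c in range(i + 1, n))) / M[i][i]
    return x

def gp_predict(xs, ys, x_new, jitter=1e-6):
    # GP posterior mean and standard deviation at x_new, using a constant
    # mean equal to the average of the training targets.
    K = [[kernel(a, b) + (jitter if i == j else 0.0)
          for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    k = [kernel(a, x_new) for a in xs]
    y_bar = sum(ys) / len(ys)
    alpha = solve(K, [y - y_bar for y in ys])
    v = solve(K, k)
    mean = y_bar + sum(ki * ai for ki, ai in zip(k, alpha))
    var = kernel(x_new, x_new) - sum(ki * vi for ki, vi in zip(k, v))
    return mean, math.sqrt(max(var, 0.0))

def active_learning(n_iter=10, kappa=2.0):
    xs = [-4.0, 0.0, 4.0]                       # small initial design
    ys = [log_posterior(x) for x in xs]         # the only "expensive" calls
    grid = [-5.0 + 0.1 * i for i in range(101)]
    for _ in range(n_iter):
        def utility(x):
            mean, std = gp_predict(xs, ys, x)
            return mean + kappa * std           # illustrative acquisition rule
        candidates = [x for x in grid
                      if all(abs(x - xi) > 1e-9 for xi in xs)]
        x_next = max(candidates, key=utility)
        xs.append(x_next)
        ys.append(log_posterior(x_next))        # one new model evaluation
    return xs, ys

xs, ys = active_learning()
print("model evaluations:", len(xs))
print("best evaluated point:", xs[ys.index(max(ys))])
```

In this sketch the expensive function is called only once per active-learning iteration; a sampler would then draw from the cheap GP mean rather than from `log_posterior` itself, which is where the savings described above would come from.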